Training for blind locomotion on rough terrain

We leverage Constraints as Terminations to perform a velocity tracking task on rough terrain while satisfying safety and style constraints. Observations include the measured positions and velocities of the 10 lower joints of the robot (5 per leg), the previous action, the waist angular velocity, the gravity vector projected in waist frame, central phases for each leg defined as cosine and sine pairs, and the linear and angular velocity command that the robot must track. Central phases are used to guide leg motion toward a desired gait pattern. The action space consists of the desired joint position offsets with respect to a default joint configuration. The resulting target position is then converted into torques through a low level proportional-derivative controller. We train policies with a curriculum on the terrain difficulty using Isaac Gym.

Training for loco-manipulation

We extend our RL scheme to humanoid loco-manipulation for transporting a package in simulation. Most of the constraints from the blind locomotion are kept and the velocity commands is replaced by observations about the box and the target location.

For simplicity, the robot starts in a position for which the package is placed between its hands. This eases the pick-up procedure compared to more extensive works where the robot has to get into position first.

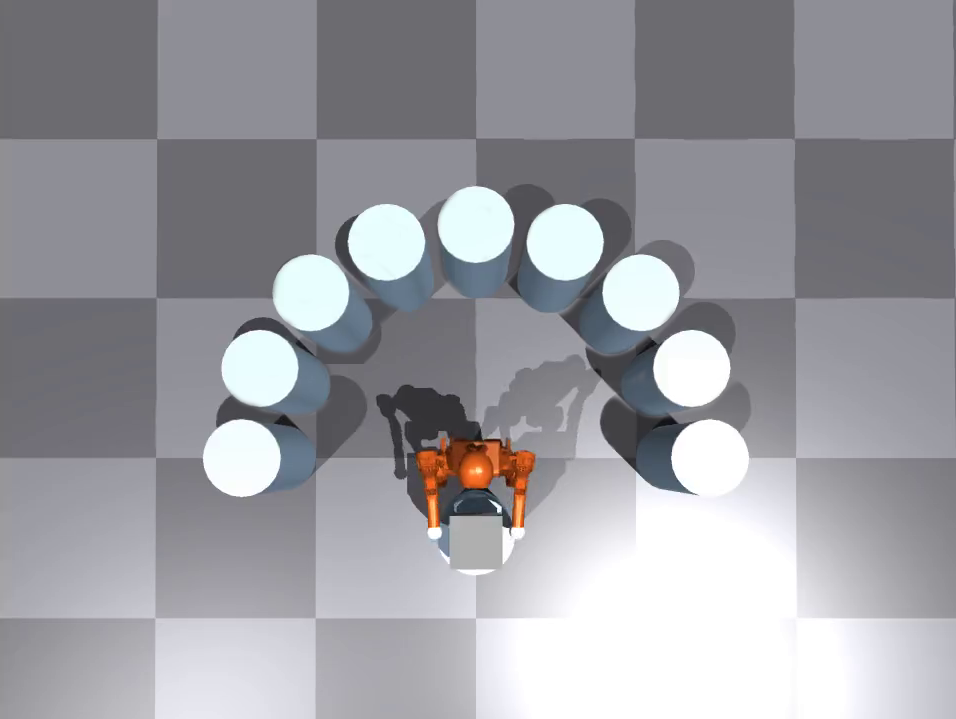

The goal is to release the package atop another 90 cm pillar placed randomly in a half circle behind the robot with a radius of 1.5 m.

Transport over longer distances

The package transport can be seamlessly extended to longer distances even if training was only done for a short one. To do so, we scale down the goal distance in the observations to go back to the training situation. The robot can handle push disturbances even when transporting the box, as seen on the right video in the middle of the trajectory.

Going further, we can also achieve long distance navigation, including turns during transport, by regularly modifying the goal position to guide the robot along a path.

Changing the size of the package

The robot can consistently transport smaller packages as well, here with a 15 centimeters box instead of the default 25 centimeters one. However, although it can transport them, the robot struggles to properly release bigger packages at the goal location as it does not open the arms wide enough.